I spent most of last weekend explaining to a friend why his RAG bot keeps confidently quoting paragraphs that don’t exist in his docs. Two hours in, we figured out the answer was the same answer it always is: his chunks were too big and the wrong shape, and his retriever was returning a slab of unrelated boilerplate that the model dutifully treated as gospel. Chunking is the most boring part of building a retrieval system and the part most people get wrong, including me, repeatedly, for about a year.

This is the post I wish I’d had two years ago. It’s not a survey of every chunking strategy on the internet. It’s the four I actually keep using, the two I tried and dropped, and the order I’d do things in if I were starting over today.

Chunk size is the wrong knob to turn first

Every RAG tutorial opens with “pick a chunk size of 500 tokens with 50 overlap.” That’s a fine default and it is not the thing that’s making your retrieval bad. The thing making your retrieval bad is almost always one of:

- Your chunks ignore document structure (you split a table down the middle).

- Your chunks have no context (chunk 47 of a contract has no idea it’s about indemnification).

- Your retriever returns one chunk when the answer needs three.

- Your embeddings are choking on the boilerplate that wraps your real content.

I’ve watched teams spend two weeks A/B-testing chunk sizes between 384 and 1024 tokens and find no significant difference, then in one afternoon get a 30-point jump in recall by switching from naive token-splitting to splitting on document headings. The chunk-size-first instinct is the wrong instinct. Start with what you’re splitting on, not how big the pieces are.

What I actually use: structure-aware splitting

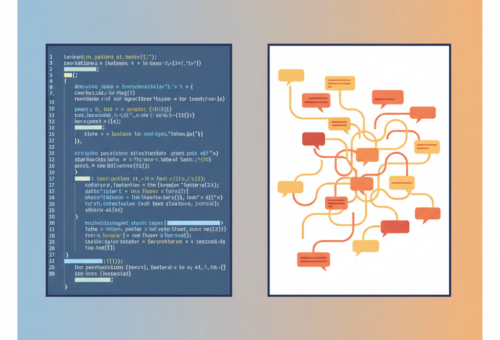

For any document with real structure (Markdown, HTML, code, anything with headings or sections), I split on the structure first and then re-split anything still too long. The LangChain text splitters reference calls this recursive splitting (its RecursiveCharacterTextSplitter), and the implementation is basically: try "\n## ", fall back to "\n\n", then "\n", then ". ". I built my own tiny version because LangChain pulls in too many dependencies for a single-purpose script:

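```python
import math

# Separators from coarsest to finest: headings, paragraphs, lines, sentences.
SEPS = ["\n## ", "\n### ", "\n\n", "\n", ". "]

def count_tokens(text: str) -> int:
    # Rough stand-in (about 4 characters per token); swap in your real tokenizer.
    return math.ceil(len(text) / 4)

def split(text: str, max_tokens: int = 512) -> list[str]:
    if count_tokens(text) <= max_tokens:
        return [text.strip()]
    for sep in SEPS:
        if sep in text:
            parts = text.split(sep)
            chunks = []
            buf = parts[0]
            for p in parts[1:]:
                cand = buf + sep + p
                if count_tokens(cand) > max_tokens:
                    # Buffer is full: emit it, re-splitting on finer separators if needed.
                    # Note: the separator at this boundary is dropped.
                    chunks.extend(split(buf, max_tokens))
                    buf = p
                else:
                    buf = cand
            chunks.extend(split(buf, max_tokens))
            return [c.strip() for c in chunks if c.strip()]
    # Fallback: hard split by characters, using the same ~4 chars/token estimate
    return [text[i:i+max_tokens*4] for i in range(0, len(text), max_tokens*4)]
```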

The key thing this gets right that token-by-token splitting gets wrong: a chunk almost always corresponds to a section the author wrote on purpose. Recall improves because the embedding for “how to configure SSO” actually looks like an SSO configuration section, not an SSO sentence stuck to half a paragraph about API keys.

What I actually use: prepend the parent context

This one is the single highest-leverage change I have ever made to a RAG pipeline. It’s also so simple it feels like cheating.

For every chunk, prepend a short header that names where it lives in the document. Something like:

```text
Document: Engineering Onboarding Guide
Section: SSO Setup > Okta

[chunk text here]
```

That’s it. The embedding now encodes both the local content and the global location, which means a query like “how do I add a new SAML provider” matches the right chunk even when the chunk text itself never says “SAML.” Anthropic’s contextual retrieval write-up takes this to its logical extreme. They generate a short context blurb per chunk via an LLM call before embedding, and report a 49% reduction in retrieval failures when contextual embeddings are combined with contextual BM25 (35% from the embeddings alone). I’ve seen something in the same ballpark on internal benchmarks. If you only do one thing on this list, do this one.

The tradeoff: an extra LLM call per chunk at indexing time. For a static corpus that’s fine, you index once. For a corpus that updates hourly, you’ll want to cache aggressively or use the cheaper “just prepend the heading path” version, which captures most of the win for free.
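A minimal sketch of the cheap "just prepend the heading path" version, assuming Markdown input with ATX-style `#` headings. The function name `with_heading_paths` and the "(top level)" label are made up for illustration, and the sketch does not guard against `#` lines inside code blocks:

```python
import re

# Matches ATX headings such as "## Okta" (an assumption about the input format).
HEADING = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")

def with_heading_paths(markdown: str, doc_title: str) -> list[str]:
    """Return one context-prefixed chunk per heading-delimited section."""
    path: list[tuple[int, str]] = []   # (level, title) trail of enclosing headings
    body: list[str] = []               # lines of the section being collected
    chunks: list[str] = []

    def flush() -> None:
        text = "\n".join(body).strip()
        if text:
            section = " > ".join(title for _, title in path) or "(top level)"
            chunks.append(f"Document: {doc_title}\nSection: {section}\n\n{text}")
        body.clear()

    for line in markdown.splitlines():
        m = HEADING.match(line)
        if m:
            flush()  # emit the previous section under its own heading path
            level, title = len(m.group(1)), m.group(2)
            # Leave any sections at the same or deeper level, then descend.
            while path and path[-1][0] >= level:
                path.pop()
            path.append((level, title))
        else:
            body.append(line)
    flush()
    return chunks
```

Each returned string is what you would embed. Sections that are still too long can go through a size-based splitter afterwards, with the same header repeated on every piece.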

What I actually use: separate chunks for tables and code

I have lost real money to RAG systems that split a pricing table down the middle. Tables and code blocks have to be treated as atomic units. My splitter sees a table or fenced code block, pulls it out as its own chunk regardless of size, and replaces it with a placeholder in the surrounding prose. If a table is genuinely huge (think 500-row CSV), I summarize it via an LLM call into a one-paragraph description and store both: the description gets embedded and indexed, the raw table is what the model sees at retrieval time.

This pairs nicely with the structured outputs work I wrote about in my post on getting Claude to stop rambling and cut output tokens by 75%. Once your chunks are clean, your model’s outputs get clean too, because it isn’t trying to reformat broken markdown on the fly.
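The pull-out-and-placeholder step for tables and code might look roughly like this. It is a sketch under assumptions: Markdown input, tables written as pipe-delimited rows, and a hypothetical `[[BLOCK_n]]` placeholder format. The name `extract_atomic_blocks` is illustrative, not taken from any library:

```python
FENCE = "`" * 3  # built this way so the example can itself sit inside a fenced block

def extract_atomic_blocks(markdown: str) -> tuple[str, list[str]]:
    """Return (prose with placeholders, atomic blocks) for a Markdown string."""
    prose: list[str] = []
    blocks: list[str] = []
    current: list[str] = []   # lines of the block being collected
    kind = None               # None, "code", or "table"

    def close_block() -> None:
        nonlocal kind
        blocks.append("\n".join(current))
        prose.append(f"[[BLOCK_{len(blocks) - 1}]]")
        current.clear()
        kind = None

    for line in markdown.splitlines():
        stripped = line.strip()
        if kind == "code":
            current.append(line)
            if stripped.startswith(FENCE):     # closing fence ends the code block
                close_block()
        elif stripped.startswith(FENCE):       # opening fence
            if kind == "table":
                close_block()
            kind = "code"
            current.append(line)
        elif stripped.startswith("|") and stripped.endswith("|"):
            kind = "table"                     # pipe-delimited row
            current.append(line)
        else:
            if kind == "table":                # first non-table line ends the table
                close_block()
            prose.append(line)
    if kind is not None:                       # unterminated fence or trailing table
        close_block()
    return "\n".join(prose), blocks
```

The prose goes through the normal splitter and each entry in `blocks` becomes its own chunk, whatever its size. Summarizing very large tables would be a separate step on top of this.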

What I actually use: hybrid retrieval before I touch chunking again

If your retrieval is bad, the next thing to fix after chunk shape is your retriever, not your chunks. Pure dense embedding retrieval misses keyword matches that BM25 catches in its sleep. The combination (BM25 plus embeddings, fused with reciprocal rank fusion) is the default I now reach for, and it has been the production default at Vespa, Pinecone, and most of the RAG-as-a-service vendors for a couple of years. The Pinecone team’s hybrid search post walks through the basics and is still the clearest writeup I’ve found.
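For reference, reciprocal rank fusion itself is only a few lines. This sketch assumes each retriever hands back an ordered list of chunk IDs, best first. The constant k = 60 is the value commonly used for RRF, and the alphabetical tie-breaking is an arbitrary choice made here for determinism:

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of chunk IDs into a single ranking."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            # Each list contributes 1 / (k + rank); chunks missing from a list add nothing.
            scores[chunk_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the output is stable.
    return sorted(scores, key=lambda c: (-scores[c], c))

bm25_hits = ["c3", "c1", "c7"]    # hypothetical keyword results
dense_hits = ["c1", "c9", "c3"]   # hypothetical embedding results
fused = reciprocal_rank_fusion([bm25_hits, dense_hits])
# fused == ["c1", "c3", "c9", "c7"]
```

RRF only looks at ranks, so BM25 scores and cosine similarities never have to be put on a common scale.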

The order I follow when retrieval is bad:

1. Look at what your retriever is returning for ten real queries. Most of the time the answer is obvious from the top-3.

2. Try hybrid retrieval (BM25 + embeddings + RRF).

3. Try contextual prepending on chunks.

4. Then think about chunk size.

I know teams that are step-4 deep before they’ve done step 1. Don’t be that team.

What I tried and dropped: semantic chunking

For about three months I was sold on semantic chunking. The idea: embed every sentence, then split where the cosine similarity between consecutive sentences drops below a threshold. It sounds elegant. The pitch is that you split on “meaning shifts” instead of arbitrary token counts.

In practice it gave me chunks of wildly varying size, was 50x slower to index, and on my benchmarks didn’t beat structure-aware splitting on a single corpus. The one place I’d still consider it is for transcripts or other unstructured text without headings, where semantic splitting is genuinely better than blind paragraph splitting. For everything else, you already have structure, you just have to use it.
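For anyone who wants to try it on transcripts, the core of semantic chunking fits in a short function. This is a sketch, not any particular library's implementation: `embed` is a hypothetical callable standing in for your embedding model, the input is assumed to be already split into sentences, and the 0.5 threshold is a placeholder you would have to tune:

```python
import math
from typing import Callable, Sequence

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def semantic_chunks(
    sentences: list[str],
    embed: Callable[[str], Sequence[float]],   # hypothetical embedding function
    threshold: float = 0.5,                    # placeholder value, tune per corpus
) -> list[str]:
    """Start a new chunk wherever consecutive sentences fall below the threshold."""
    if not sentences:
        return []
    vectors = [embed(s) for s in sentences]    # one embedding per sentence
    chunks = [[sentences[0]]]
    for prev, cur, sentence in zip(vectors, vectors[1:], sentences[1:]):
        if cosine(prev, cur) < threshold:
            chunks.append([sentence])          # similarity dropped: new chunk
        else:
            chunks[-1].append(sentence)
    return [" ".join(chunk) for chunk in chunks]
```

Both complaints above are visible in the code: every sentence needs its own embedding at index time, and nothing bounds how long or short a chunk can get.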

What I tried and dropped: small-to-big retrieval

The “sentence window” or “small-to-big” pattern: embed individual sentences, but at retrieval time return the full paragraph each sentence came from. The theory is that you get precise embeddings and rich context.

The practice: my contexts ballooned, my prompt costs doubled, and I was sometimes pulling 4KB of mostly-irrelevant surrounding paragraph for one matched sentence. Combined with the contextual-prepend trick from earlier, plain paragraph-sized chunks did better than small-to-big in every test I ran. Your mileage may vary, especially on FAQ-shaped content where each sentence actually is a self-contained answer.
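For comparison, the bookkeeping behind small-to-big is simple. In this sketch (function names are illustrative, and the sentence splitter is a naive regex) each sentence remembers its parent paragraph, and matched sentences are swapped for their parents at retrieval time:

```python
import re

def build_sentence_index(paragraphs: list[str]) -> list[tuple[str, int]]:
    """Return (sentence, parent paragraph index) pairs; the sentences get embedded."""
    index: list[tuple[str, int]] = []
    for i, para in enumerate(paragraphs):
        # Naive split after ., ! or ? followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", para.strip()):
            if sentence:
                index.append((sentence, i))
    return index

def expand_to_parents(
    hits: list[int],                  # positions in the index, best match first
    index: list[tuple[str, int]],
    paragraphs: list[str],
) -> list[str]:
    """Replace matched sentences with their parent paragraphs, deduplicated."""
    seen: set[int] = set()
    context: list[str] = []
    for hit in hits:
        parent = index[hit][1]
        if parent not in seen:
            seen.add(parent)
            context.append(paragraphs[parent])
    return context
```

The context blow-up is easy to see here: one short matched sentence pulls in its whole paragraph, however long that paragraph is.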

A concrete starting point you can use this week

If you’re standing up a new RAG pipeline and you don’t want to think about this for the next month, start here:

- Split on document structure (headings, then paragraphs, then sentences as a fallback).

- Target 400-600 tokens per chunk, hard cap at 1000.

- Tables and code blocks are atomic.

- Prepend "Document: X\nSection: Y > Z\n\n" to every chunk before embedding.

- Use BM25 + dense embeddings + RRF for retrieval.

- Re-rank the top 20 with a cross-encoder, return the top 5.

- Measure retrieval quality on 50 real queries with human-graded ground truth before you change anything.

That is approximately what I run in production and it is good enough that the bottleneck moves from retrieval back to the model’s actual reasoning, which is where you want it. I keep the small RAG eval harness I built for this on my project portfolio. It’s a single Python script, no frameworks, and it has saved me from at least three bad chunking decisions.
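If you don't have a harness yet, the core measurement is small. The following is not the script mentioned above, just a minimal sketch of mean recall@k, assuming a hand-graded mapping from each query to the set of chunk IDs that answer it and a hypothetical `retrieve(query, k)` callable wrapping your pipeline:

```python
from typing import Callable

def mean_recall_at_k(
    graded: dict[str, set[str]],                 # query -> relevant chunk IDs (human-graded)
    retrieve: Callable[[str, int], list[str]],   # hypothetical: returns ranked chunk IDs
    k: int = 5,
) -> float:
    """Average, over queries, of the share of relevant chunks found in the top k."""
    if not graded:
        raise ValueError("need at least one graded query")
    total = 0.0
    for query, relevant in graded.items():
        if not relevant:
            raise ValueError(f"no relevant chunks graded for query: {query!r}")
        retrieved = set(retrieve(query, k)[:k])
        total += len(relevant & retrieved) / len(relevant)
    return total / len(graded)
```

Run it before and after each change (splitter, header prepending, hybrid retrieval) on the same graded queries, so that every change is compared against the same baseline.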

The meta-lesson is that RAG quality is mostly about respecting the structure of the documents you actually have, not about clever ML. Get the boring stuff right and the system mostly works. Skip it and no chunk size in the world will save you.