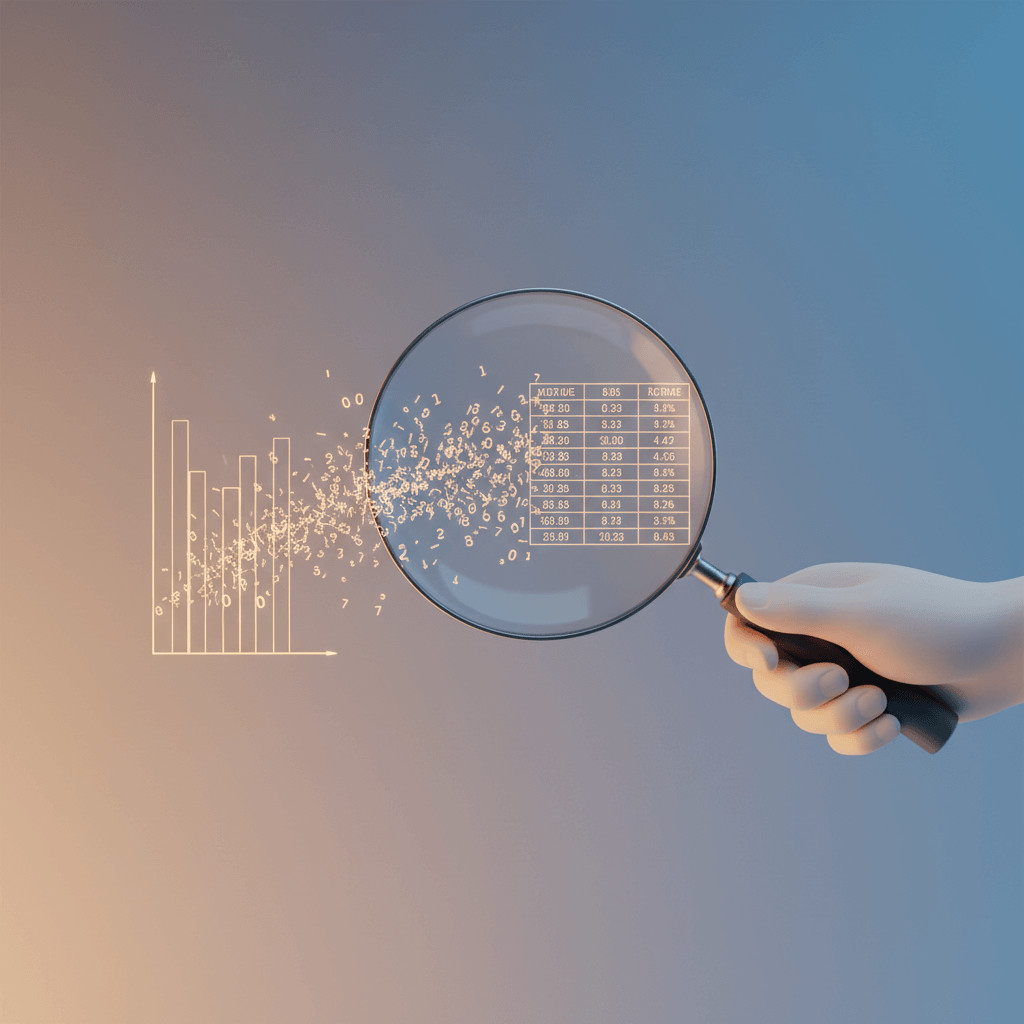

I spent most of last Wednesday debugging a data pipeline that feeds financial time series into Claude. 500 rows of daily closing prices, nothing exotic. The model kept hallucinating trends that weren’t there, confidently telling me March was a down month when it was clearly up. The numbers were right there in the prompt. It just couldn’t read them properly.

Turns out this is a well-documented failure mode, and a new paper from researchers at multiple universities explains exactly why it happens. They also offer a fix so simple I’m a little annoyed nobody tried it sooner.

The context window is a ceiling, not a guarantee

Every model now advertises its context window like a selling point. 128K tokens. 200K. A million. The implication is that you can dump a huge document in there and the model will handle it. For prose, that’s roughly true. For numbers, it falls apart fast.

The problem isn’t that the model runs out of space. It’s that the attention mechanism that actually processes the tokens gets stretched too thin. When you feed in a long sequence of numbers, every token looks kind of similar to every other token. There’s no semantic structure for the model to latch onto. “142.50” and “143.20” and “141.80” are all just… numbers. The attention weights spread out almost evenly across the sequence instead of concentrating on the tokens that matter.

The researchers call this “attention dispersion in the Softmax mechanism,” which is a fancy way of saying the model’s focus goes blurry when it stares at too many numbers.
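To make the blurriness concrete, here’s a toy sketch of my own (not the paper’s analysis). It assumes every token gets the same attention score except one token that stands out by a fixed gap, then checks how much weight softmax gives that standout as the sequence grows. Real attention scores come from learned queries and keys, so treat `max_weight` and `standout_gap` as illustrative names only.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def max_weight(n_tokens, standout_gap=0.0):
    """Largest softmax weight when one token scores `standout_gap`
    above n_tokens - 1 otherwise identical tokens (a toy assumption)."""
    scores = [0.0] * (n_tokens - 1) + [standout_gap]
    return max(softmax(scores))

# Same standout gap, longer sequence: the standout's share keeps shrinking.
for n in (10, 100, 1000):
    print(n, round(max_weight(n), 4), round(max_weight(n, standout_gap=2.0), 4))
```

With identical scores the weight on any one token is exactly 1/n, and even a token that clearly stands out loses most of its share once it competes with hundreds of lookalikes.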

What SepSeq actually does

The fix is called SepSeq (Separate Sequence), and the core idea is embarrassingly simple: insert separator tokens between chunks of your numerical data.

That’s it. No fine-tuning, no new architecture, no training run. You take your long sequence of numbers, break it into segments, and put separator tokens between them. The separators act as what the paper calls “attention sinks” – they absorb the excess attention that would otherwise get smeared across the whole sequence. This lets the model focus on local segments while still keeping track of the global context.
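A minimal sketch of the idea, assuming a fixed chunk size and a plain-text separator. The function name `insert_separators`, the chunk size, and the `<SEP>` string are my placeholders; the paper’s actual separator token and segmenting choices aren’t reproduced here.

```python
def insert_separators(values, chunk_size=8, separator="\n"):
    """Join numbers into a prompt string, with `separator` between chunks.

    chunk_size and separator are illustrative defaults, not values from the paper.
    """
    if chunk_size < 1:
        raise ValueError("chunk_size must be at least 1")
    chunks = [values[i:i + chunk_size] for i in range(0, len(values), chunk_size)]
    return separator.join(", ".join(str(v) for v in chunk) for chunk in chunks)

prices = [142.5, 143.2, 141.8, 144.1, 143.9, 145.2]
print(insert_separators(prices, chunk_size=3, separator=" <SEP> "))
# prints: 142.5, 143.2, 141.8 <SEP> 144.1, 143.9, 145.2
```

The data itself is untouched; only the layout changes, which is why this works with any API-based model.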

Think of it like adding paragraph breaks to a wall of text. The information is the same, but the structure makes it readable.

The numbers are hard to argue with

The researchers tested SepSeq across nine different LLMs, which is a decent spread. The results: an average relative accuracy improvement of 35.6% across different tasks involving long numerical sequences. And here’s the part that surprised me – it also reduced total inference token consumption by 16.4% on average. So it’s not just more accurate, it’s cheaper to run.

I want to be careful here because “35.6% relative improvement” can mean different things depending on the baseline. If a model was at 40% accuracy and went to 54%, that’s a 35% relative jump but still not great in absolute terms. The paper tests across diverse domains though, and the improvements hold pretty consistently. It’s not a fluke of one particular benchmark.
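The arithmetic is quick to check. The sketch below applies the same 35.6% relative gain to a few made-up baselines (these are my own illustrative numbers, not figures from the paper) to show how different the absolute result can look.

```python
def relative_improvement(baseline, improved):
    """Relative gain of `improved` over `baseline`, as a fraction of baseline."""
    return (improved - baseline) / baseline

def apply_relative_gain(baseline, gain):
    """Accuracy after a relative gain, capped at 1.0 (100%)."""
    return min(1.0, baseline * (1.0 + gain))

# Hypothetical baselines; 0.356 is the average relative gain quoted above.
for baseline in (0.20, 0.40, 0.70):
    improved = apply_relative_gain(baseline, 0.356)
    print(f"{baseline:.0%} -> {improved:.1%} (+{improved - baseline:.1%} absolute)")
```

A 40% baseline lands at roughly 54%, which is the case described above. The same relative gain on a weaker model still leaves it weak.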

Why this matters if you’re building anything with numbers

If you’re feeding LLMs tabular data, time series, financial figures, sensor readings, or really any sequence of numbers longer than a few dozen values, you’re probably hitting this problem without realizing it. The model won’t tell you it’s confused. It’ll just confidently give you a wrong answer, which is the worst kind of failure mode.

I’ve been experimenting with a simple version of this in my own pipelines since reading the paper. Instead of dumping a flat CSV into the prompt, I break it into weekly chunks with clear headers. Something like:

    --- Week of March 3 ---
    Mon: 142.50
    Tue: 143.20
    Wed: 141.80
    Thu: 144.10
    Fri: 143.90

    --- Week of March 10 ---
    Mon: 145.20
    ...

It’s not exactly what the paper prescribes, but the principle is the same: give the attention mechanism structure to work with. Anecdotally, the outputs got noticeably better. I haven’t run rigorous evals on it yet (I’ve written separately about how to actually measure this when doing LLM reasoning work), but the hallucinated trends mostly went away.
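Here’s roughly how that weekly layout can be generated. This is a sketch, not my production code: `format_weekly` is a name made up for this post, the year in the sample dates is an assumption (2025, where March 3 falls on a Monday), and it expects rows already sorted by date.

```python
from datetime import date, timedelta

def format_weekly(rows):
    """Format (date, price) pairs into weekly chunks with a header per week.

    Assumes `rows` is sorted by date; weeks start on Monday.
    """
    lines = []
    current_monday = None
    for day, price in rows:
        monday = day - timedelta(days=day.weekday())
        if monday != current_monday:
            if lines:
                lines.append("")  # blank line between weeks
            lines.append(f"--- Week of {monday.strftime('%B')} {monday.day} ---")
            current_monday = monday
        lines.append(f"{day.strftime('%a')}: {price:.2f}")
    return "\n".join(lines)

# Sample rows; the year is assumed for illustration.
rows = [
    (date(2025, 3, 3), 142.50),
    (date(2025, 3, 4), 143.20),
    (date(2025, 3, 5), 141.80),
    (date(2025, 3, 6), 144.10),
    (date(2025, 3, 7), 143.90),
    (date(2025, 3, 10), 145.20),
]
print(format_weekly(rows))
```

Month and weekday names come from `strftime`, so they follow the process locale; under Python’s default locale they come out in English.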

The bigger picture for attention research

SepSeq isn’t the first paper to identify attention dispersion as a problem. The “attention sink” phenomenon has been documented before, notably in StreamingLLM, a paper from researchers at MIT, Meta AI, and collaborating institutions, which found that initial tokens in a sequence absorb disproportionate attention regardless of their content. SepSeq builds on that insight but applies it in a targeted, practical way.

What I find interesting is that this is a training-free intervention. You don’t need access to model weights. You don’t need a GPU cluster. You just need to restructure your input. That makes it immediately useful for anyone working with API-based models where you can’t touch the internals.

This is the kind of applied research I’d like to see more of. Not “we trained a bigger model” but “we figured out how to use existing models better with a simple trick.” It’s the difference between buying a faster car and learning to drive the one you have. I do a lot of this kind of practical optimization in my consulting work, and the low-hanging fruit is almost always in how you structure the input, not which model you pick.

What you can try this week

If you’re working with numerical data and LLMs, here’s what I’d actually do:

First, check whether your current setup is affected. Take a prompt with a long numerical sequence and ask the model to identify specific values or trends. Compare its answers against ground truth. If it’s getting things wrong, attention dispersion is a likely culprit.

Second, restructure your numerical inputs with explicit separators. Break long sequences into logical chunks. Use headers, blank lines, or delimiter tokens between groups. The exact separator matters less than having one.

Third, don’t trust the context window number on the tin. A model with a 128K context window processing 5,000 numbers is not the same as that model processing 5,000 words of prose. The effective capacity for numerical data is much lower unless you help the attention mechanism out.
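The first check above can be sketched as a tiny harness. Everything here is an assumption for illustration: `ask_model` is a placeholder for whatever function wraps your API client (it takes a prompt string and returns the model’s text), and the one-word up/down/flat question is just one easy-to-score probe.

```python
def direction(prices):
    """Ground truth: compare the last value against the first."""
    if prices[-1] > prices[0]:
        return "up"
    if prices[-1] < prices[0]:
        return "down"
    return "flat"

def check_model(prices, ask_model):
    """Ask the model a question with a known answer and score it.

    `ask_model` is a hypothetical callable: prompt string in, reply text out.
    """
    prompt = (
        "Daily closing prices:\n"
        + ", ".join(f"{p:.2f}" for p in prices)
        + "\nDid the series end up, down, or flat relative to its first value? "
        "Answer with one word."
    )
    answer = ask_model(prompt).strip().lower()
    expected = direction(prices)
    return {"expected": expected, "answer": answer, "correct": answer == expected}

# Stand-in model that always answers "down", to show a failing check.
result = check_model([142.50, 143.20, 145.20], lambda prompt: "Down\n")
print(result)
# prints: {'expected': 'up', 'answer': 'down', 'correct': False}
```

Run it once with the flat sequence and once with the separated version, over enough samples to see a pattern, and you’ll know whether restructuring is helping on your data.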

The paper is worth reading in full if you’re deep into this stuff. For everyone else, the takeaway is simple: your LLM’s context window has a blind spot for numbers, and adding structure to your numerical inputs is a free fix.