Confession: I rewrote my first Laravel AI feature three times before I got it right. The first version was raw curl calls to the OpenAI API, glued together with a service class I was secretly proud of. It worked until it didn’t. The second version was a popular package I won’t name because the maintainers seem like nice people. It worked until it changed providers. The third version is what I actually run in production, and it’s been quiet for six months, which is the highest praise I can give software.

I’m writing this because every Laravel developer I know is being asked the same question: “can we add AI to this?” The honest answer is yes, easily, but the path you pick matters more than the model you pick. Here’s the stack I reach for, the package I bailed on, and the parts I think are still mostly hype.

The cheap path: raw OpenAI client, and why I bailed on it

If you just want to ship a single AI feature, the openai-php/laravel client is the obvious choice. It’s community-maintained rather than an official OpenAI SDK, but it’s actively looked after, the API is clean, and you can be calling GPT in about ten minutes.

use OpenAI\Laravel\Facades\OpenAI;

$response = OpenAI::chat()->create([
    'model' => 'gpt-4o-mini',
    'messages' => [
        ['role' => 'system', 'content' => 'You summarize support tickets.'],
        ['role' => 'user', 'content' => $ticket->body],
    ],
]);

return $response->choices[0]->message->content;

That’s it. No abstraction layer, just the API call.

Here’s where I bailed: as soon as I had three AI features in the same app, I needed retries, timeouts, structured output, fallback to a different provider when OpenAI was rate-limited, and a way to log every prompt and response without sprinkling that logic everywhere. I tried to build that myself. It got ugly fast.

The OpenAI client is great when you have one or two calls. By the time you have five, you’re writing a framework on top of it and pretending you’re not.
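To make “writing a framework on top of it” concrete, here’s a minimal sketch of the kind of helper that tends to appear at that point: retry each provider a few times, back off between attempts, then fall through to the next one. It’s plain PHP, not part of the OpenAI client. The name completeWithFallback and the provider callables are hypothetical, and each callable is assumed to wrap a real API call and throw on a rate limit or timeout.

```php
<?php

/**
 * Try each provider in order, retrying each a few times before falling back.
 * Each provider is a hypothetical callable: it takes a prompt, returns text,
 * and throws on rate limits or timeouts.
 *
 * @param array<string, callable(string): string> $providers
 */
function completeWithFallback(
    array $providers,
    string $prompt,
    int $attemptsPerProvider = 3,
    ?callable $sleep = null,
): string {
    // Injectable sleep so the helper can be exercised without real waiting.
    $sleep ??= static fn (int $seconds) => sleep($seconds);
    $lastError = null;

    foreach ($providers as $name => $call) {
        for ($attempt = 1; $attempt <= $attemptsPerProvider; $attempt++) {
            try {
                return $call($prompt);
            } catch (Throwable $e) {
                $lastError = $e;
                // Log every failure in one place instead of at each call site.
                error_log("[ai] {$name} attempt {$attempt} failed: {$e->getMessage()}");

                if ($attempt < $attemptsPerProvider) {
                    $sleep(2 ** ($attempt - 1)); // wait 1s, 2s, 4s, ...
                }
            }
        }
    }

    throw new RuntimeException('All AI providers failed', 0, $lastError);
}
```

Even this leaves out per-call timeouts, structured output, and prompt logging, which is roughly where the hand-rolled version starts to sprawl.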

Prism: the Laravel-native approach I keep coming back to

Prism is the package I actually run now. It’s not flashy. It abstracts the provider (OpenAI, Anthropic, Gemini, Ollama) behind a Laravel-shaped API, and it handles the boring work for you: retries, timeouts, structured output, tool calling. You stop thinking about it after a week.

Here’s the same support-ticket summarizer in Prism:

use Prism\Prism\Prism;
use Prism\Prism\Enums\Provider;

$response = Prism::text()
    ->using(Provider::OpenAI, 'gpt-4o-mini')
    ->withSystemPrompt('You summarize support tickets.')
    ->withPrompt($ticket->body)
    ->asText();

return $response->text;

A bit longer, and worth it. The reason: when GPT-5-mini becomes the cheaper option in three months, I change one line. When my finance team asks me to switch to a self-hosted Llama for compliance reasons, I change one line. The first time I had to do that with the raw client, it was a two-day refactor across twelve files. With Prism it took me an afternoon.
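One way to make the “change one line” claim literal is to keep the provider and model in config, so the switch happens in .env rather than in code. This is a sketch only: config/ai.php, the AI_PROVIDER and AI_MODEL names, and the key layout are all assumptions, and it relies on using() accepting the provider as a plain string as well as the Provider enum.

```php
<?php

// config/ai.php (hypothetical file; key and env names are assumptions)
return [
    'provider' => env('AI_PROVIDER', 'openai'),
    'model' => env('AI_MODEL', 'gpt-4o-mini'),
];

// At the call site, assuming using() accepts a string provider name:
//
//   Prism::text()
//       ->using(config('ai.provider'), config('ai.model'))
//       ->withPrompt($ticket->body)
//       ->asText();
```

With that in place, moving to a self-hosted model would mean changing two env values, though the prompts still deserve a re-test against the new model.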

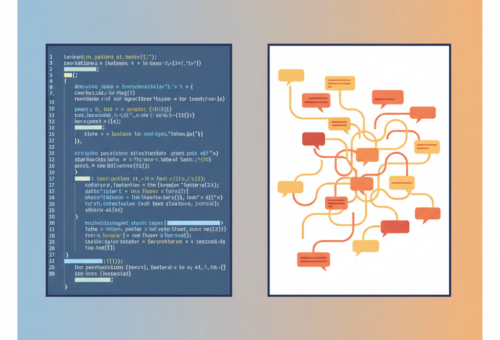

The structured output helper is the part I was missing for years:

use Prism\Prism\Enums\Provider;
use Prism\Prism\Prism;
use Prism\Prism\Schema\ObjectSchema;
use Prism\Prism\Schema\StringSchema;

$schema = new ObjectSchema(
    name: 'ticket_summary',
    description: 'A structured ticket summary',
    properties: [
        new StringSchema('category', 'One of: billing, bug, feature, other'),
        new StringSchema('summary', 'One sentence summary'),
        new StringSchema('priority', 'low, medium, high'),
    ],
    requiredFields: ['category', 'summary', 'priority'],
);

$response = Prism::structured()
    ->using(Provider::OpenAI, 'gpt-4o-mini')
    ->withSchema($schema)
    ->withPrompt($ticket->body)
    ->asStructured();

// $response->structured is the decoded array: category, summary, priority.
$ticket->update($response->structured);

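I used to parse JSON out of the model’s text response with a regex and pray. Now I get an array back with the keys the schema requires, instead of free text I have to scrape. One caveat: the allowed values written into those StringSchema descriptions are instructions to the model, not hard constraints, so it’s still worth checking category and priority before they reach the database. The reduction in 2am pages has been measurable.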
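Since the allowed categories and priorities in that schema live in free-text descriptions, a small guard between the model and the database is cheap insurance. A minimal sketch in plain PHP, assuming the same three fields as the schema; validateTicketSummary is a hypothetical helper, not part of Prism.

```php
<?php

/**
 * Check a decoded ticket summary before it touches the database.
 * The allowed values mirror the schema descriptions.
 *
 * @param array<string, mixed> $data
 * @return array{category: string, summary: string, priority: string}
 */
function validateTicketSummary(array $data): array
{
    $allowed = [
        'category' => ['billing', 'bug', 'feature', 'other'],
        'priority' => ['low', 'medium', 'high'],
    ];

    foreach ($allowed as $field => $values) {
        if (!in_array($data[$field] ?? null, $values, true)) {
            throw new InvalidArgumentException("Unexpected {$field} in model output");
        }
    }

    $summary = $data['summary'] ?? null;
    if (!is_string($summary) || trim($summary) === '') {
        throw new InvalidArgumentException('Missing summary in model output');
    }

    // Return only the known keys so stray fields never reach update().
    return [
        'category' => $data['category'],
        'summary' => trim($summary),
        'priority' => $data['priority'],
    ];
}
```

The call site would then become something like $ticket->update(validateTicketSummary($response->structured ?? [])), and an exception inside a queued job simply triggers a retry.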

Streaming responses without breaking your Blade templates

The first time I tried to stream a chat response in a Livewire component, I made a mess. I was server-side rendering, then trying to push tokens through Livewire’s event bus, and the latency was worse than just waiting for the full response.

What actually works: a controller that streams Server-Sent Events, plus a small chunk of vanilla JS on the front end. Prism makes this simple:

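public function stream(Request $request)
{
    return response()->stream(function () use ($request) {
        $response = Prism::text()
            ->using(Provider::OpenAI, 'gpt-4o-mini')
            ->withPrompt($request->input('message'))
            ->asStream();

        foreach ($response as $chunk) {
            // One SSE frame per chunk: a "data:" line followed by a blank line.
            echo "data: " . json_encode(['text' => $chunk->text]) . "\n\n";

            // ob_flush() raises a notice when no output buffer is active, so check first.
            if (ob_get_level() > 0) {
                ob_flush();
            }
            flush();
        }
    }, 200, [
        'Content-Type' => 'text/event-stream',
        'Cache-Control' => 'no-cache',
        'X-Accel-Buffering' => 'no', // stop Nginx from buffering the stream
    ]);
}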

If you’re behind Nginx, the X-Accel-Buffering: no header is the thing I forgot for two days. Without it, Nginx buffers the whole response and your “streaming” UI sits there for ten seconds before dumping everything at once. I’m telling you this so you don’t have my Tuesday.
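The wire format itself is small enough to pull into a helper, which also makes it easy to send a final named event. That matters because a browser EventSource reconnects on its own when the server closes the connection, so the client needs a signal that it should call close(). A sketch in plain PHP; sseFrame and the done event name are hypothetical choices, not something Prism or Laravel provides.

```php
<?php

/**
 * Format one Server-Sent Events frame.
 * json_encode() escapes newlines, so the payload always fits on a single
 * "data:" line; the blank line at the end terminates the frame.
 */
function sseFrame(array $payload, ?string $event = null): string
{
    // An optional "event:" line routes the frame to a named listener
    // on the client instead of the default message handler.
    $frame = $event !== null ? "event: {$event}\n" : '';

    return $frame . 'data: ' . json_encode($payload, JSON_THROW_ON_ERROR) . "\n\n";
}
```

Inside the streaming loop this would replace the hand-built string, with one extra echo sseFrame(['done' => true], 'done') after the loop finishes.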

For the front end, EventSource does what you want with about fifteen lines of JS. I tried React, then Livewire, then Alpine. Plain EventSource won. Sometimes the boring choice is the right one. I went on about this same theme in my Laravel 12 release post if you’re curious.

Background jobs, queues, and the part that actually matters

Here’s the thing nobody warns you about: the moment you have AI in your app, you have a latency problem. A fast OpenAI call is 800ms. A slow one is 30 seconds. Either way, it’s not something you want in a request thread.

So everything goes through Laravel’s queue:

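use App\Models\Ticket; // assumes the default model namespace
use Illuminate\Contracts\Queue\ShouldQueue;
use Illuminate\Foundation\Queue\Queueable;
use Prism\Prism\Enums\Provider;
use Prism\Prism\Prism;

class SummarizeTicket implements ShouldQueue
{
    use Queueable;

    public int $tries = 3;

    // Seconds to wait before each retry: a short pause, then five minutes.
    public array $backoff = [30, 300];

    public int $timeout = 60;

    public function __construct(public int $ticketId) {}

    public function handle(): void
    {
        $ticket = Ticket::findOrFail($this->ticketId);

        // schema() is assumed to return the ObjectSchema from the earlier example.
        $response = Prism::structured()
            ->using(Provider::OpenAI, 'gpt-4o-mini')
            ->withSchema($this->schema())
            ->withPrompt($ticket->body)
            ->asStructured();

        $ticket->update([
            'ai_summary' => $response->structured['summary'],
            'ai_category' => $response->structured['category'],
        ]);
    }
}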

A few things I had to learn the hard way.

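The default worker timeout in Laravel is 60 seconds. AI calls can blow past that on bad days, so set $timeout explicitly per job rather than relying on the default, and keep it a few seconds below your queue connection’s retry_after value so a slow job isn’t picked up a second time while the first attempt is still running.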

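Retries with increasing backoff are not optional. OpenAI rate limits will hit you on launch day, and you want jobs to come back five minutes later, not fail loudly. The $tries and $backoff properties handle this, with one catch: a single integer $backoff is a fixed delay between attempts. For delays that grow, give $backoff an array such as [30, 300], one entry per retry.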
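If you’d rather compute the retry delays than hard-code them, a doubling schedule with a cap is a few lines of plain PHP. This is a sketch under my own naming: backoffSchedule is hypothetical, and the 30-second base and 300-second cap are just example numbers.

```php
<?php

/**
 * Build an increasing delay schedule, in seconds, for job retries.
 * Delays double from $base and are capped at $cap.
 *
 * @return list<int>
 */
function backoffSchedule(int $base, int $retries, int $cap): array
{
    $delays = [];

    for ($i = 0; $i < $retries; $i++) {
        // 2 ** $i doubles the delay on each successive retry.
        $delays[] = min($cap, $base * (2 ** $i));
    }

    return $delays;
}
```

Laravel lets a job define a backoff() method that returns an array like this in place of the $backoff property. Keep in mind that $tries counts the first attempt too, so a five-entry schedule only gets fully used with $tries set to 6.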

Use a separate queue for AI work. If your password-reset emails sit behind a 30-second AI summarizer on the same queue, users wait for their reset links while tickets get summarized. I run an ai queue with its own worker count, and the rest of the app stays snappy. The Laravel queues documentation covers this in more detail than you’d expect.
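Wiring that up is mostly a matter of naming the queue at dispatch time and pointing a dedicated worker at it. A minimal fragment, assuming the SummarizeTicket job from above and a queue called ai; the worker commands are shown as comments because they run in the shell, and the process counts are left to you.

```php
<?php

// Send the job to the dedicated queue instead of the default one.
SummarizeTicket::dispatch($ticket->id)->onQueue('ai');

// Then run separate workers, each bound to one queue:
//   php artisan queue:work --queue=ai
//   php artisan queue:work --queue=default
```

Because each worker only pulls from its own queue, a backlog of slow AI jobs can’t delay mail or notifications on the default queue.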

What’s still mostly hype

A few things I keep getting asked about that I’d skip in a Laravel app today.

Vector search inside Laravel. I see packages that promise pgvector integration with Eloquent magic. They work, but if you have a real RAG use case, you should be running your retrieval layer outside the request cycle anyway. A small Python service or a managed vector DB is less coupled and easier to debug. I wrote up the same instinct on the JS side in my note on moving off raw OpenAI calls in the Vercel AI SDK, and the same logic applies in Laravel.

Agents inside Laravel controllers. No. Agents call tools, tools call APIs, APIs are slow, and your request times out. If you need agent-style behavior, run it as a queued job that pushes results back via broadcasting or a webhook. Trying to make a synchronous agent loop inside a controller is how you get a 504 on a feature demo.

The “just train your own” pitch. I’ve yet to see a Laravel shop where fine-tuning beat a good prompt with retrieval, and the maintenance cost is not zero. By the time you’ve collected enough labeled data to fine-tune, the base models have improved twice. Your time is better spent on the prompts and the data pipeline.

The minimal stack I’d start with today

If I were starting a Laravel app this week and I needed AI in it, here’s what I’d reach for: Prism for the model layer, structured output for anything that goes back into the database, the existing queue worker for everything that takes more than a second, and a separate ai queue so nothing else gets blocked. That’s the whole list. No agent framework, no vector DB, no fine-tuning. You can add those later when the actual product tells you what you need.

The thing I want you to take away: the AI layer is not the interesting part of your app. The interesting part is your data, your users, and the messy rules of how your business actually works. The AI is a tool. Like any tool, the goal is to pick something boring enough that you stop thinking about it. If you’re spending more time on the AI plumbing than on the feature it’s powering, you’ve picked the wrong stack. (I keep a few more of these “what I actually run” stack pieces over on my portfolio if you want the full set.)

This week, try this: take one feature in your Laravel app that does string parsing, anything that reads user input and tries to bucket it, and replace it with a structured Prism call inside a queued job. Measure the latency. Measure the accuracy. Decide if it’s worth keeping. That’s the experiment that’ll teach you more than any blog post will.