AirLLM is a Python library that lets you run huge language models, including 70B-parameter beasts like Llama 2, on hardware with as little as 4GB of GPU memory. It pulls this off through layer-by-layer inference. Instead of loading the whole model into memory, it loads one layer at a time from disk, runs the math, frees it, then grabs the next layer. Pair that with 4-bit quantization and disk offloading, and you technically have a setup that runs a 70B model on a regular laptop.
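To make the load, compute, free loop concrete, here is a toy sketch in plain Python. It is not AirLLM's code or API: the helper names (`save_layers`, `apply_layer`, `layered_forward`), the JSON file layout, and the two tiny "layers" are all made up for illustration. The only point is that the forward pass never holds more than one layer's weights in memory at once.

```python
import json
import os
import tempfile


def save_layers(layers, directory):
    """Write each layer's weights to its own file, like a per-layer checkpoint."""
    paths = []
    for i, weights in enumerate(layers):
        path = os.path.join(directory, f"layer_{i}.json")
        with open(path, "w") as f:
            json.dump(weights, f)
        paths.append(path)
    return paths


def apply_layer(weights, x):
    """Toy layer: matrix-vector product followed by ReLU."""
    return [max(0.0, sum(w * v for w, v in zip(row, x))) for row in weights]


def layered_forward(paths, x):
    """Run the forward pass while holding only one layer in memory at a time."""
    for path in paths:
        with open(path) as f:
            weights = json.load(f)   # load this layer from disk
        x = apply_layer(weights, x)  # run the math
        del weights                  # drop it before loading the next layer
    return x


with tempfile.TemporaryDirectory() as tmp:
    toy_layers = [
        [[1.0, 0.0], [0.0, 1.0]],    # identity
        [[2.0, 0.0], [0.0, -1.0]],   # scale; ReLU then zeroes the negative value
    ]
    layer_paths = save_layers(toy_layers, tmp)
    output = layered_forward(layer_paths, [3.0, 4.0])

print(output)
```

Peak memory here is one layer plus the activations, which is the whole trick. The price is a disk read for every layer on every pass.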

And that is about where the good news ends.

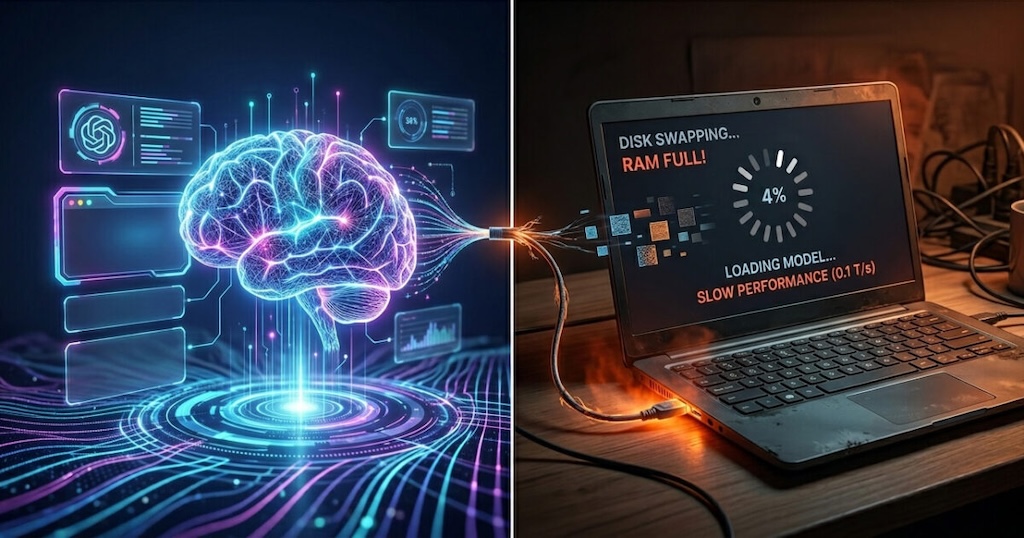

If you have seen influencers on YouTube or TikTok claiming that AirLLM kills the need for ChatGPT Plus, the Claude API, or any paid AI tooling, you are being sold a fantasy. Yes, it works. No, you will not enjoy using it. A single response from a 70B model on a machine with 4 to 16GB of memory can take 15 to 30 minutes, sometimes longer. Your CPU will be pinned, your fans will spin up, and your system may freeze if you try to do anything else while it runs. Disk I/O becomes the bottleneck, because the SSD gets hammered every time a new layer loads.
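A back-of-envelope estimate shows why disk I/O dominates. The sketch below assumes, for illustration only, that every generated token requires streaming the full set of 4-bit weights from disk once, that the SSD sustains 3.5 GB/s, and that compute time is zero. None of these figures are measurements of AirLLM, and real runs will vary with hardware, caching, and settings.

```python
def weights_size_gb(params_billion, bits_per_weight):
    """Approximate on-disk weight size: parameters x bits, converted to gigabytes."""
    return params_billion * bits_per_weight / 8


def seconds_per_token(params_billion, bits_per_weight, disk_read_gb_per_s):
    """Time to stream every layer from disk once (assumed: one full pass per token)."""
    return weights_size_gb(params_billion, bits_per_weight) / disk_read_gb_per_s


def response_minutes(tokens, params_billion, bits_per_weight, disk_read_gb_per_s):
    """Total disk-bound time for a response of the given length, in minutes."""
    per_token = seconds_per_token(params_billion, bits_per_weight, disk_read_gb_per_s)
    return tokens * per_token / 60


# Assumed inputs: 70B parameters, 4-bit weights, 3.5 GB/s sustained SSD reads.
size = weights_size_gb(70, 4)
per_token = seconds_per_token(70, 4, 3.5)
total = response_minutes(100, 70, 4, 3.5)

print(f"weights: {size:.1f} GB, per token: {per_token:.1f} s, 100 tokens: {total:.1f} min")
```

Under those assumptions the weights come to about 35 GB, each token costs about 10 seconds of pure disk reads, and a 100-token reply lands at roughly 17 minutes. A slower drive or a longer answer pushes that up quickly.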

The trade-off is simple. You save money at the cost of usability. API calls cost pennies and return answers in seconds. AirLLM costs nothing and returns answers after your coffee has gone cold.

For production use, this is not a real option. Cloud inference through OpenAI, Anthropic, or hosted open-source endpoints still wins on speed and reliability. Even a smaller optimized model run locally, like a quantized 7B or 13B through Ollama or llama.cpp, will give you a much better experience than pushing a 70B model through AirLLM.
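The reason the smaller models feel so much better is that their weights fit in memory, so nothing has to be streamed from disk per token. A rough check, assuming 4-bit weights and a hypothetical machine with 16GB of RAM, and ignoring the KV cache, activations, and runtime overhead (so real requirements are somewhat higher):

```python
def weights_gb(params_billion, bits_per_weight=4):
    """Approximate weight footprint in gigabytes (weights only, no runtime overhead)."""
    return params_billion * bits_per_weight / 8


def fits_in_ram(params_billion, ram_gb, bits_per_weight=4):
    """True if the quantized weights alone fit in the given amount of RAM."""
    return weights_gb(params_billion, bits_per_weight) <= ram_gb


RAM_GB = 16  # assumed laptop memory, for illustration only

report = {size: fits_in_ram(size, RAM_GB) for size in (7, 13, 70)}

for size, fits in report.items():
    verdict = "fits in RAM" if fits else "must be streamed from disk"
    print(f"{size}B at 4-bit: ~{weights_gb(size):.1f} GB, {verdict}")
```

By this estimate a 7B model needs about 3.5 GB and a 13B about 6.5 GB, both comfortable on a 16GB machine, while a 70B needs about 35 GB and cannot stay resident.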

So who is AirLLM actually for? Researchers testing ideas on a shoestring. Hobbyists who enjoy tinkering. Students curious about how large models behave. It is a proof of concept, not a replacement for proper infrastructure. Treat the influencer pitch with skepticism and set your expectations accordingly.